Experimental vs Quasi-Experimental Design: Which to Choose?

Here’s a table that summarizes the similarities and differences between an experimental and a quasi-experimental study design:

What is a quasi-experimental design?

A quasi-experimental design is a non-randomized study design used to evaluate the effect of an intervention. The intervention can be a training program, a policy change or a medical treatment.

Unlike in a true experiment, in a quasi-experimental study the choice of who gets the intervention and who doesn't is not randomized. Instead, participants may choose for themselves, the researcher may decide, or the intervention may be allocated by any other non-random method.

Having a control group is not required, but if present, it provides a higher level of evidence for the relationship between the intervention and the outcome.

(For more information, I recommend my other article: Understand Quasi-Experimental Design Through an Example.)

Examples of quasi-experimental designs include:

- One-Group Posttest Only Design

- Static-Group Comparison Design

- One-Group Pretest-Posttest Design

- Separate-Sample Pretest-Posttest Design

What is an experimental design?

An experimental design is a randomized study design used to evaluate the effect of an intervention. In its simplest form, the participants will be randomly divided into 2 groups:

- A treatment group: where participants receive the new intervention whose effect we want to study.

- A control or comparison group: where participants do not receive any intervention at all (or receive some standard intervention).

Randomization ensures that each participant has the same chance of receiving the intervention. Its objective is to make the 2 groups comparable on average. Any difference in the study outcome observed afterwards can then be attributed to the intervention rather than to pre-existing differences between the groups. In other words, randomization removes confounding.
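The following minimal simulation illustrates why randomization removes confounding. It compares how well two groups are balanced on a baseline characteristic under random assignment and under self-selection. The confounder ("motivation"), its distribution, and the opt-in rule are hypothetical assumptions made for illustration.

```python
import random
import statistics

def simulate(n=10_000, seed=1):
    """Return the treated-minus-control gap in a hypothetical confounder
    under (a) random assignment and (b) self-selection."""
    rng = random.Random(seed)
    # Hypothetical baseline confounder, e.g. motivation on a 0-100 scale.
    motivation = [rng.gauss(50, 10) for _ in range(n)]

    # Random assignment: a fair coin flip, independent of motivation.
    randomized = [rng.random() < 0.5 for _ in motivation]

    # Assumed self-selection rule: more motivated people are more likely to opt in.
    self_selected = [rng.random() < m / 100 for m in motivation]

    def mean_gap(assigned):
        treated = [m for m, t in zip(motivation, assigned) if t]
        control = [m for m, t in zip(motivation, assigned) if not t]
        return statistics.mean(treated) - statistics.mean(control)

    return mean_gap(randomized), mean_gap(self_selected)

gap_random, gap_self = simulate()
print(f"randomized gap:    {gap_random:+.2f}")
print(f"self-selected gap: {gap_self:+.2f}")
```

Under these assumptions, the randomized gap should sit close to zero and the self-selected gap should be clearly positive (around 4 points). A gap of that kind would be mistaken for a treatment effect if motivation also influenced the outcome.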

(For more information, I recommend my other article: Purpose and Limitations of Random Assignment.)

Examples of experimental designs include:

- Posttest-Only Control Group Design

- Pretest-Posttest Control Group Design

- Solomon Four-Group Design

- Matched Pairs Design

- Randomized Block Design
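Here is a sketch of how assignment works in a randomized block design. Participants are first grouped into blocks that share a characteristic, and randomization then happens separately within each block. A matched pairs design is the special case in which every block holds exactly two participants. The function name `block_randomize` and the grade-level example are hypothetical.

```python
import random
from collections import defaultdict

def block_randomize(participants, block_of, arms=("treatment", "control"), seed=None):
    """Randomly assign participants to arms separately within each block.

    participants: iterable of participant IDs
    block_of: function mapping an ID to its block (e.g. grade level, sex, clinic)
    Returns a dict {participant_id: arm}.
    """
    rng = random.Random(seed)
    blocks = defaultdict(list)
    for p in participants:
        blocks[block_of(p)].append(p)

    assignment = {}
    for members in blocks.values():
        rng.shuffle(members)
        # Deal the shuffled members round-robin, so arm sizes within a block
        # differ by at most one.
        for i, p in enumerate(members):
            assignment[p] = arms[i % len(arms)]
    return assignment

# Hypothetical example: 12 pupils, blocked by grade level (6 in grade 3, 6 in grade 4).
grades = {f"P{i:02d}": (3 if i < 6 else 4) for i in range(12)}
assignment = block_randomize(grades, grades.get, seed=42)
for grade in (3, 4):
    arms_in_block = [assignment[p] for p in grades if grades[p] == grade]
    print(f"grade {grade}: {arms_in_block.count('treatment')} treatment, "
          f"{arms_in_block.count('control')} control")
# prints "grade 3: 3 treatment, 3 control" and "grade 4: 3 treatment, 3 control"
```

Blocking guarantees balance on the blocking variable, whatever the random draw. Variables that were not used for blocking are balanced only on average, as in simple randomization.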

When to choose an experimental design over a quasi-experimental design?

Although many statistical techniques can be used to deal with confounding in a quasi-experimental study, in practice, randomization is still the best tool we have to study causal relationships.

Another problem with quasi-experiments is the natural progression of the disease or condition under study. When studying the effect of an intervention over time, one should account for natural changes, because these can be mistaken for changes in the outcome that are caused by the intervention. Having a well-chosen control group helps deal with this issue.
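The role of the control group can be sketched with simulated data. Everyone improves naturally by an assumed 5 points, and the intervention adds an assumed 2 points. A one-group pre/post comparison mixes the two. Subtracting the change seen in an untreated control group recovers the intervention's share; this is a difference-in-differences comparison, a technique named here for illustration rather than taken from the text. All numbers are hypothetical.

```python
import random
import statistics

rng = random.Random(7)
N = 2_000
NATURAL_IMPROVEMENT = 5.0  # assumed change that happens with or without treatment
TRUE_EFFECT = 2.0          # assumed effect of the intervention itself

def follow_up(baseline, treated):
    """Hypothetical follow-up score: baseline + natural change (+ effect) + noise."""
    change = NATURAL_IMPROVEMENT + (TRUE_EFFECT if treated else 0.0)
    return baseline + change + rng.gauss(0, 3)

treated_pre = [rng.gauss(40, 8) for _ in range(N)]
control_pre = [rng.gauss(40, 8) for _ in range(N)]
treated_post = [follow_up(x, True) for x in treated_pre]
control_post = [follow_up(x, False) for x in control_pre]

# One-group pretest-posttest: natural progression and effect are mixed together.
pre_post_change = statistics.mean(treated_post) - statistics.mean(treated_pre)

# With a control group: subtract the change the control group showed anyway.
control_change = statistics.mean(control_post) - statistics.mean(control_pre)
adjusted = pre_post_change - control_change

print(f"pre-post change:         {pre_post_change:.2f}")
print(f"control-adjusted change: {adjusted:.2f}")
```

With these settings, the pre-post change should land near 7 and the control-adjusted change near the assumed effect of 2. The adjustment only works if the control group would have followed the same natural course as the treated group.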

So, if losing the element of randomness seems like an unwise step down in the hierarchy of evidence, why would we ever want to do it?

This is what we’re going to discuss next.

When to choose a quasi-experimental design over a true experiment?

The issue with randomization is that it is not always achievable.

So here are some cases where using a quasi-experimental design makes more sense than using an experimental one:

- If being in one group is believed to be harmful to the participants. This may be because the intervention itself is harmful (e.g., randomizing people to smoking) or its efficacy is questionable. On the contrary, the intervention may be believed to be so beneficial that it would be unethical to put people in the control group (e.g., randomizing people to receive or not receive an operation).

- When an intervention acts on a group of people in a given location, it becomes difficult to randomize individual subjects adequately (e.g., an intervention that reduces pollution in a given area).

- When working with small sample sizes, because randomized controlled trials require a large sample to handle heterogeneity among subjects (i.e., to distribute confounding variables evenly between the intervention and control groups).
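The small-sample point can be checked with a quick simulation. Randomly split samples of different sizes into two halves, and measure how far apart the halves typically are on a baseline covariate. The covariate ("age", normal with SD 10) is a hypothetical assumption.

```python
import random
import statistics

def typical_imbalance(n, trials=1_000, seed=3):
    """Mean absolute difference in a hypothetical covariate (age, SD 10)
    between two randomly assigned halves of a sample of size n."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(trials):
        ages = [rng.gauss(40, 10) for _ in range(n)]
        # Random assignment: shuffle, then first half = treatment, second = control.
        # (Redundant for i.i.d. draws, but it mirrors the real procedure.)
        rng.shuffle(ages)
        half = n // 2
        gaps.append(abs(statistics.mean(ages[:half]) - statistics.mean(ages[half:])))
    return statistics.mean(gaps)

imbalance = {n: typical_imbalance(n) for n in (10, 40, 160, 640)}
for n, gap in imbalance.items():
    print(f"n = {n:4d}: typical age gap {gap:.2f} years")
```

Under this model, the typical gap shrinks roughly in proportion to 1/sqrt(n). It falls from about 5 years at n = 10 to well under 1 year at n = 640. With very small samples, randomization can therefore leave sizeable chance imbalances.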


5 Quasi-Experimental Design Examples

Dave Cornell (PhD)

Dr. Cornell has worked in education for more than 20 years. His work has involved designing teacher certification for Trinity College in London and in-service training for state governments in the United States. He has trained kindergarten teachers in 8 countries and helped businessmen and women open baby centers and kindergartens in 3 countries.


Chris Drew (PhD)

This article was peer-reviewed and edited by Chris Drew (PhD). The review process on Helpful Professor involves having a PhD level expert fact check, edit, and contribute to articles. Reviewers ensure all content reflects expert academic consensus and is backed up with reference to academic studies. Dr. Drew has published over 20 academic articles in scholarly journals. He is the former editor of the Journal of Learning Development in Higher Education and holds a PhD in Education from ACU.

Quasi-experimental design refers to a type of experimental design that uses pre-existing groups of people rather than randomly assigned groups.

Because the groups of research participants already exist, participants cannot be randomly assigned to conditions. This makes it difficult to infer a causal relationship between the treatment and the observed/criterion variable.

Quasi-experimental designs are generally considered inferior to true experimental designs.

Limitations of Quasi-Experimental Design

Since participants cannot be randomly assigned to the grouping variable (male/female; high education/low education), the internal validity of the study is questionable.

Extraneous variables may exist that explain the results. For example, with quasi-experimental studies involving gender, there are numerous cultural and biological variables that distinguish males and females other than gender alone.

Each one of those variables may be able to explain the results without the need to refer to gender.


Quasi-Experimental Design Examples

1. Smartboard Apps and Math

A school has decided to supplement its math resources with smartboard applications. The math teachers research the apps available and then choose two apps for each grade level. Before deciding which apps to purchase, the school contacts the seller and asks for permission to demo/test the apps before purchasing the licenses.

The study involves having different teachers use the apps with their classes. Since there are two math teachers at each grade level, each teacher will use one of the apps in their classroom for three months. At the end of three months, all students will take the same math exams. Then the school can simply compare which app improved the students’ math scores the most.

This is a quasi-experiment because the school did not randomly assign students to one app or the other. The students were already in pre-existing groups/classes.

Although randomly assigning students to one app or the other was impractical, the lack of random assignment makes the results difficult to interpret.

For instance, if students in teacher A’s class did better than the students in teacher B’s class, then can we really say the difference was due to the app? There may be other differences between the two teachers that account for the results. This poses a serious threat to the study’s internal validity.

2. Leadership Training

There is reason to believe that teaching entrepreneurs modern leadership techniques will improve their performance and shorten how long it takes for them to reach profitability. Team members will feel better appreciated and work harder, which should translate to increased productivity and innovation.

This hypothetical study took place in a third-world country in a mid-sized city. The researchers marketed the training throughout the city and received interest from 5 start-ups in the tech sector and 5 in the textile industry. The leaders of each company then attended six weeks of workshops on employee motivation, leadership styles, and effective team management.

At the end of one year, the researchers returned. They conducted a standard assessment of each start-up’s growth trajectory and administered various surveys to employees.

The results indicated that tech start-ups were further along in their growth paths than textile start-ups. The data also showed that tech work teams reported greater job satisfaction and company loyalty than textile work teams.

Although the results appear straightforward, because the researchers used a quasi-experimental design, they cannot say that the training caused the results.

The two groups may differ in ways that could explain the results. For instance, perhaps there is less growth potential in the textile industry in that city, or perhaps tech leaders are more progressive and willing to accept new leadership strategies.

3. Parenting Styles and Academic Performance

Psychologists are very interested in factors that affect children’s academic performance. Since parenting styles affect a variety of children’s social and emotional characteristics, it stands to reason that they may affect academic performance as well. The four parenting styles under study are: authoritarian, authoritative, permissive, and neglectful/uninvolved.

To examine this possible relationship, researchers assessed the parenting style of 120 families with third graders in a large metropolitan city. Trained raters made two-hour home visits to conduct observations of parent/child interactions. That data was later compared with the children’s grades.

The results revealed that children raised in authoritative households had the highest grades of all the groups.

However, because the researchers were not able to randomly assign children to one of the four parenting styles, the internal validity is called into question.

There may be explanations for the results other than parenting style. For instance, perhaps parents who practice authoritative parenting also come from a higher SES demographic than the other parents.

Because they have higher income and education levels, they may put more emphasis on their child’s academic performance. Or, because they have greater financial resources, their children may attend STEM camps and other co-curricular and extracurricular academically oriented classes.
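A simulation can show how SES alone could produce the observed pattern. In the hypothetical data below, SES raises grades and makes authoritative parenting more common, while parenting style has no effect on grades at all. The naive comparison still favors authoritative homes. Comparing families within the same SES level (stratification, one simple form of statistical control) removes the spurious gap. The probabilities, the grade model, and the sample of 20,000 families are assumptions, not data from the study described above.

```python
import random
import statistics

rng = random.Random(5)
families = []
for _ in range(20_000):
    high_ses = rng.random() < 0.5
    # Assumed: authoritative parenting is more common in high-SES homes.
    authoritative = rng.random() < (0.7 if high_ses else 0.3)
    # Assumed: grades depend on SES only; parenting style has NO effect here.
    grade = 75 + (10 if high_ses else 0) + rng.gauss(0, 5)
    families.append((high_ses, authoritative, grade))

def gap(rows):
    """Mean grade of authoritative families minus mean grade of all others."""
    auth = [g for _, a, g in rows if a]
    other = [g for _, a, g in rows if not a]
    return statistics.mean(auth) - statistics.mean(other)

naive = gap(families)
# Compare like with like: compute the gap within each SES stratum, then average.
within = [gap([f for f in families if f[0] == level]) for level in (True, False)]
stratified = statistics.mean(within)

print(f"naive gap:      {naive:.2f}")
print(f"stratified gap: {stratified:.2f}")
```

Under these assumptions, the naive gap should be about 4 grade points and the stratified gap close to 0. Stratification can only adjust for confounders that were actually measured.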

4. Government Reforms and Economic Impact

Government policies can have a tremendous impact on economic development. Making it easier for small businesses to open and reducing bank loans are examples of policies that can have immediate results. So, a third-world country decides to test policy reforms in two mid-sized cities. One city receives reforms directed at small businesses, while the other receives reforms directed at luring foreign investment.

The government was careful to choose two cities that were similar in terms of size and population demographics.

Over the next five years, economic growth data were collected at the end of each fiscal year. The measures consisted of housing sales, local GDP, and unemployment rates.

At the end of five years, the results indicated that small business reforms had a much larger impact on economic growth than foreign investment. The city that received small business reforms saw an increase in housing sales and GDP and a drop in unemployment. The other city saw stagnant sales and GDP and a slight increase in unemployment.

On the surface, it appears that small business reform is the better way to go. However, a more careful analysis revealed that the economic improvement observed in the one city was actually the result of two multinational real estate firms entering the market. The two firms specialize in converting dilapidated warehouses into shopping centers and residential properties.

5. Gender and Meditation

Meditation can help relieve stress and reduce symptoms of depression and anxiety. It is a simple, easy-to-use technique that just about anyone can try. However, are the benefits real, or do people just believe it can help? To find out, a team of counselors designed a study to put it to the test.

Because they believed that women are more likely to benefit than men, they recruited both males and females for their study.

Both groups were trained in meditation by a licensed professional. The training took place over three weekends. Participants were instructed to practice at home at least four times a week for the next three months and to keep a journal each time they meditated.

At the end of the three months, physical and psychological health data were collected on all participants. For physical health, participants’ blood pressure was measured. For psychological health, participants filled out a happiness scale and the emotional tone of their diaries was examined.

The results showed that meditation worked better for women than men. Women had lower blood pressure, scored higher on the happiness scale, and wrote more positive statements in their diaries.

Unfortunately, the researchers noticed that men apparently did not practice meditation as much as instructed. They had very few journal entries, and in post-study interviews a vast majority of men admitted that they practiced meditation only about half as often as instructed.

The lack of practice is an extraneous variable. Perhaps if men had adhered to the study instructions, their scores on the physical and psychological measures would have been higher than women’s measures.

The quasi-experiment is used when researchers want to study the effects of a variable/treatment on different groups of people. Groups can be defined based on gender, parenting style, SES demographics, or any number of other variables.

The problem is that when interpreting the results, even clear differences between the groups cannot be confidently attributed to the treatment.

The groups may differ in ways other than the grouping variables. For example, leadership training in the study above may have improved the textile start-ups’ performance if the techniques had been applied at all. Similarly, men may have benefited from meditation as much as women, if they had just tried.

References

Baumrind, D. (1991). Parenting styles and adolescent development. In R. M. Lerner, A. C. Peterson, & J. Brooks-Gunn (Eds.), Encyclopedia of Adolescence (pp. 746–758). New York: Garland Publishing.

Cook, T. D., & Campbell, D. T. (1979). Quasi-experimentation: Design & analysis issues in field settings. Boston, MA: Houghton Mifflin.

Maciejewski, M. L. (2020). Quasi-experimental design. Biostatistics & Epidemiology, 4(1), 38–47. https://doi.org/10.1080/24709360.2018.1477468

Thyer, B. (2012). Quasi-Experimental Research Designs. Oxford University Press. https://doi.org/10.1093/acprof:oso/9780195387384.001.0001


Child Care and Early Education Research Connections

Experiments and quasi-experiments.

This page includes an explanation of the types, key components, validity, ethics, and advantages and disadvantages of experimental design.

An experiment is a study in which the researcher manipulates the level of some independent variable and then measures the outcome. Experiments are powerful techniques for evaluating cause-and-effect relationships. Many researchers consider experiments the "gold standard" against which all other research designs should be judged. Experiments are conducted both in the laboratory and in real life situations.

Types of Experimental Design

There are two basic types of experimental research design:

- True experiments

- Quasi-experiments

The purpose of both is to examine the cause of certain phenomena.

True experiments, in which all the important factors that might affect the phenomena of interest are completely controlled, are the preferred design. Often, however, it is not possible or practical to control all the key factors, so it becomes necessary to implement a quasi-experimental research design.

Similarities between true and quasi-experiments:

- Study participants are subjected to some type of treatment or condition

- Some outcome of interest is measured

- The researchers test whether differences in this outcome are related to the treatment

Differences between true experiments and quasi-experiments:

- In a true experiment, participants are randomly assigned to either the treatment or the control group, whereas they are not assigned randomly in a quasi-experiment

- In a quasi-experiment, the control and treatment groups differ not only in terms of the experimental treatment they receive, but also in other, often unknown or unknowable, ways. Thus, the researcher must try to statistically control for as many of these differences as possible

- Because control is lacking in quasi-experiments, there may be several "rival hypotheses" competing with the experimental manipulation as explanations for observed results

Key Components of Experimental Research Design

The manipulation of predictor variables.

In an experiment, the researcher manipulates the factor that is hypothesized to affect the outcome of interest. The factor that is being manipulated is typically referred to as the treatment or intervention. The researcher may manipulate whether research subjects receive a treatment (e.g., antidepressant medicine: yes or no) and the level of treatment (e.g., 50 mg, 75 mg, 100 mg, and 125 mg).

Suppose, for example, a group of researchers was interested in the causes of maternal employment. They might hypothesize that the provision of government-subsidized child care would promote such employment. They could then design an experiment in which some subjects would be provided the option of government-funded child care subsidies and others would not. The researchers might also manipulate the value of the child care subsidies in order to determine if higher subsidy values might result in different levels of maternal employment.

Random Assignment

- Study participants are randomly assigned to different treatment groups

- All participants have the same chance of being in a given condition

- Participants are assigned to either the group that receives the treatment, known as the "experimental group" or "treatment group," or to the group which does not receive the treatment, referred to as the "control group"

- Random assignment neutralizes factors other than the independent and dependent variables, making it possible to directly infer cause and effect
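A minimal sketch of complete random assignment follows. The participants are shuffled and then dealt to the conditions in turn, so every participant has the same chance of landing in each condition and group sizes stay balanced. The function name `randomly_assign` is hypothetical. The arms reuse the dose levels from the antidepressant example earlier, plus an assumed placebo arm.

```python
import random

def randomly_assign(participants, arms=("treatment", "control"), seed=None):
    """Complete random assignment: shuffle, then deal participants to arms in
    turn so arm sizes differ by at most one."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    return {p: arms[i % len(arms)] for i, p in enumerate(shuffled)}

# Hypothetical usage: 100 participants, a placebo arm plus four dose levels.
arms = ("placebo", "50 mg", "75 mg", "100 mg", "125 mg")
groups = randomly_assign(range(100), arms, seed=2024)
print({arm: sum(1 for a in groups.values() if a == arm) for arm in arms})
# prints {'placebo': 20, '50 mg': 20, '75 mg': 20, '100 mg': 20, '125 mg': 20}
```

Fixing the `seed` makes the allocation reproducible for auditing. In a real trial, the allocation sequence would normally be generated and concealed by someone not involved in enrolling participants.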

Random Sampling

Traditionally, experimental researchers have used convenience sampling to select study participants. However, as research methods have become more rigorous, and the problems with generalizing from a convenience sample to the larger population have become more apparent, experimental researchers are increasingly turning to random sampling. In experimental policy research studies, participants are often randomly selected from program administrative databases and randomly assigned to the control or treatment groups.
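The two-step procedure in the last sentence (random selection from an administrative database, then random assignment) can be sketched as follows. The sampling frame of case IDs and the function name are hypothetical. `random.Random.sample` draws without replacement, so no record is selected twice.

```python
import random

def sample_and_assign(frame, n, seed=None):
    """(1) Draw a simple random sample of n records from a sampling frame,
    then (2) randomly split the sample into treatment and control."""
    rng = random.Random(seed)
    sample = rng.sample(frame, n)  # random selection: supports external validity
    # sample() already returns a random order; the explicit shuffle just makes
    # the separate random-assignment step visible.
    rng.shuffle(sample)            # random assignment: supports internal validity
    half = n // 2
    return sample[:half], sample[half:]

# Hypothetical administrative database of 5,000 case IDs.
frame = [f"case-{i:04d}" for i in range(5_000)]
treatment, control = sample_and_assign(frame, 200, seed=9)
print(len(treatment), len(control))  # prints "100 100"
```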

Validity of Results

The two types of validity of experiments are internal and external. It is often difficult to achieve both in social science research experiments.

Internal Validity

- When an experiment is internally valid, we can be confident that the independent variable (e.g., child care subsidies) caused the outcome of the study (e.g., maternal employment)

- When subjects are randomly assigned to treatment or control groups, we can assume that the independent variable caused the observed outcomes because the two groups should not have differed from one another at the start of the experiment

- For example, take the child care subsidy example above. Since research subjects were randomly assigned to the treatment (child care subsidies available) and control (no child care subsidies available) groups, the two groups should not have differed at the outset of the study. If, after the intervention, mothers in the treatment group were more likely to be working, we can assume that the availability of child care subsidies promoted maternal employment

One potential threat to internal validity in experiments occurs when participants either drop out of the study or refuse to participate in the study. If particular types of individuals drop out or refuse to participate more often than individuals with other characteristics, this is called differential attrition. For example, suppose an experiment was conducted to assess the effects of a new reading curriculum. If the new curriculum was so tough that many of the slowest readers dropped out of school, the school with the new curriculum would experience an increase in the average reading scores. The reason they experienced an increase in reading scores, however, is because the worst readers left the school, not because the new curriculum improved students' reading skills.
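The reading-curriculum example can be reproduced with a small simulation. The curriculum is given no effect at all, and the weakest readers are more likely to leave, so the average of those who remain still rises. The score distribution and the attrition rule (80% of students scoring below 85 drop out) are hypothetical assumptions.

```python
import random
import statistics

rng = random.Random(4)
# Hypothetical reading scores for 1,000 students; the curriculum has NO effect.
scores = [rng.gauss(100, 15) for _ in range(1_000)]

# Assumed attrition rule: students below 85 stay with probability 0.2 only.
stayers = [s for s in scores if s >= 85 or rng.random() < 0.2]

print(f"mean of all students:   {statistics.mean(scores):.1f}")
print(f"mean of those who stay: {statistics.mean(stayers):.1f}")
```

Under these assumptions, the stayers' mean should be roughly 3 points higher, purely because of who left. Comparing the characteristics of dropouts and completers in each group is a common first check for this threat.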

External Validity

- External validity is also of particular concern in social science experiments

- It can be very difficult to generalize experimental results to groups that were not included in the study

- Studies that randomly select participants from the most diverse and representative populations are more likely to have external validity

- The use of random sampling techniques makes it easier to generalize the results of studies to other groups

For example, a research study shows that a new curriculum improved reading comprehension of third-grade children in Iowa. To assess the study's external validity, you would ask whether this new curriculum would also be effective with third graders in New York or with children in other elementary grades.


Ethics

It is particularly important in experimental research to follow ethical guidelines. Protecting the health and safety of research subjects is imperative. To ensure subject safety, all researchers should have their project reviewed by an Institutional Review Board (IRB). The National Institutes of Health supplies strict guidelines for project approval. Many of these guidelines are based on the Belmont Report.

The basic ethical principles:

- Respect for persons: requires that research subjects are not coerced into participating in a study and requires the protection of research subjects who have diminished autonomy

- Beneficence: requires that experiments do not harm research subjects, and that researchers minimize the risks for subjects while maximizing the benefits for them

- Justice: requires that all forms of differential treatment among research subjects be justified

Advantages and Disadvantages of Experimental Design

Advantages

The environment in which the research takes place can often be carefully controlled. Consequently, it is easier to estimate the true effect of the variable of interest on the outcome of interest.

Disadvantages

It is often difficult to assure the external validity of the experiment, due to the frequently nonrandom selection processes and the artificial nature of the experimental context.


7.3 Quasi-Experimental Research

Learning objectives.

- Explain what quasi-experimental research is and distinguish it clearly from both experimental and correlational research.

- Describe three different types of quasi-experimental research designs (nonequivalent groups, pretest-posttest, and interrupted time series) and identify examples of each one.

The prefix quasi means “resembling.” Thus quasi-experimental research is research that resembles experimental research but is not true experimental research. Although the independent variable is manipulated, participants are not randomly assigned to conditions or orders of conditions (Cook & Campbell, 1979). Because the independent variable is manipulated before the dependent variable is measured, quasi-experimental research eliminates the directionality problem. But because participants are not randomly assigned—making it likely that there are other differences between conditions—quasi-experimental research does not eliminate the problem of confounding variables. In terms of internal validity, therefore, quasi-experiments are generally somewhere between correlational studies and true experiments.

Quasi-experiments are most likely to be conducted in field settings in which random assignment is difficult or impossible. They are often conducted to evaluate the effectiveness of a treatment—perhaps a type of psychotherapy or an educational intervention. There are many different kinds of quasi-experiments, but we will discuss just a few of the most common ones here.

Nonequivalent Groups Design

Recall that when participants in a between-subjects experiment are randomly assigned to conditions, the resulting groups are likely to be quite similar. In fact, researchers consider them to be equivalent. When participants are not randomly assigned to conditions, however, the resulting groups are likely to be dissimilar in some ways. For this reason, researchers consider them to be nonequivalent. A nonequivalent groups design, then, is a between-subjects design in which participants have not been randomly assigned to conditions.

Imagine, for example, a researcher who wants to evaluate a new method of teaching fractions to third graders. One way would be to conduct a study with a treatment group consisting of one class of third-grade students and a control group consisting of another class of third-grade students. This would be a nonequivalent groups design because the students are not randomly assigned to classes by the researcher, which means there could be important differences between them. For example, the parents of higher achieving or more motivated students might have been more likely to request that their children be assigned to Ms. Williams’s class. Or the principal might have assigned the “troublemakers” to Mr. Jones’s class because he is a stronger disciplinarian. Of course, the teachers’ styles, and even the classroom environments, might be very different and might cause different levels of achievement or motivation among the students. If at the end of the study there was a difference in the two classes’ knowledge of fractions, it might have been caused by the difference between the teaching methods—but it might have been caused by any of these confounding variables.

Of course, researchers using a nonequivalent groups design can take steps to ensure that their groups are as similar as possible. In the present example, the researcher could try to select two classes at the same school, where the students in the two classes have similar scores on a standardized math test and the teachers are the same sex, are close in age, and have similar teaching styles. Taking such steps would increase the internal validity of the study because it would eliminate some of the most important confounding variables. But without true random assignment of the students to conditions, there remains the possibility of other important confounding variables that the researcher was not able to control.

Pretest-Posttest Design

In a pretest-posttest design, the dependent variable is measured once before the treatment is implemented and once after it is implemented. Imagine, for example, a researcher who is interested in the effectiveness of an antidrug education program on elementary school students’ attitudes toward illegal drugs. The researcher could measure the attitudes of students at a particular elementary school during one week, implement the antidrug program during the next week, and finally, measure their attitudes again the following week. The pretest-posttest design is much like a within-subjects experiment in which each participant is tested first under the control condition and then under the treatment condition. It is unlike a within-subjects experiment, however, in that the order of conditions is not counterbalanced because it typically is not possible for a participant to be tested in the treatment condition first and then in an “untreated” control condition.

If the average posttest score is better than the average pretest score, then it makes sense to conclude that the treatment might be responsible for the improvement. Unfortunately, one often cannot conclude this with a high degree of certainty because there may be other explanations for why the posttest scores are better. One category of alternative explanations goes under the name of history. Other things might have happened between the pretest and the posttest. Perhaps an antidrug program aired on television and many of the students watched it, or perhaps a celebrity died of a drug overdose and many of the students heard about it. Another category of alternative explanations goes under the name of maturation. Participants might have changed between the pretest and the posttest in ways that they were going to anyway because they are growing and learning. If it were a yearlong program, participants might become less impulsive or better reasoners and this might be responsible for the change.

Another alternative explanation for a change in the dependent variable in a pretest-posttest design is regression to the mean. This refers to the statistical fact that an individual who scores extremely on a variable on one occasion will tend to score less extremely on the next occasion. For example, a bowler with a long-term average of 150 who suddenly bowls a 220 will almost certainly score lower in the next game. Her score will “regress” toward her mean score of 150. Regression to the mean can be a problem when participants are selected for further study because of their extreme scores. Imagine, for example, that only students who scored especially low on a test of fractions are given a special training program and then retested. Regression to the mean all but guarantees that their scores will be higher even if the training program has no effect.

A closely related concept, and an extremely important one in psychological research, is spontaneous remission. This is the tendency for many medical and psychological problems to improve over time without any form of treatment. The common cold is a good example. If one were to measure symptom severity in 100 common cold sufferers today, give them a bowl of chicken soup every day, and then measure their symptom severity again in a week, they would probably be much improved. This does not mean that the chicken soup was responsible for the improvement, however, because they would have been much improved without any treatment at all. The same is true of many psychological problems. A group of severely depressed people today is likely to be less depressed on average in 6 months. In reviewing the results of several studies of treatments for depression, researchers Michael Posternak and Ivan Miller found that participants in waitlist control conditions improved an average of 10 to 15% before they received any treatment at all (Posternak & Miller, 2001).

Thus one must generally be very cautious about inferring causality from pretest-posttest designs.
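To see how regression to the mean can imitate a treatment effect, here is a small Python simulation of the fractions-test scenario. It is a sketch under stated assumptions: the ability distribution (mean 70, SD 10), the measurement-noise SD of 8, and the rule of selecting the lowest-scoring 10% are hypothetical choices, not values from any study. No training is given between the two tests.

```python
import random
import statistics

# Hypothetical model: each student has a stable "true" ability, and each
# observed test score is that ability plus independent measurement noise.
random.seed(42)

N_STUDENTS = 10_000
true_ability = [random.gauss(70, 10) for _ in range(N_STUDENTS)]

def take_test(ability):
    """Observed score = true ability + random noise (SD 8, an assumption)."""
    return ability + random.gauss(0, 8)

pretest = [take_test(a) for a in true_ability]
posttest = [take_test(a) for a in true_ability]  # no treatment in between

# Select only the students who scored especially low on the pretest,
# as a program for the lowest scorers would.
cutoff = sorted(pretest)[N_STUDENTS // 10]  # roughly the bottom 10%
selected = [i for i, score in enumerate(pretest) if score < cutoff]

pre_mean = statistics.mean(pretest[i] for i in selected)
post_mean = statistics.mean(posttest[i] for i in selected)

print(f"selected students: {len(selected)}")
print(f"mean pretest:  {pre_mean:.1f}")
print(f"mean posttest: {post_mean:.1f}")
```

The selected group's posttest mean comes out several points higher than its pretest mean even though nothing was done to it: the students were partly selected for bad luck on the first test, and that luck does not repeat.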

Does Psychotherapy Work?

Early studies on the effectiveness of psychotherapy tended to use pretest-posttest designs. In a classic 1952 article, researcher Hans Eysenck summarized the results of 24 such studies showing that about two thirds of patients improved between the pretest and the posttest (Eysenck, 1952). But Eysenck also compared these results with archival data from state hospital and insurance company records showing that similar patients recovered at about the same rate without receiving psychotherapy. This suggested to Eysenck that the improvement that patients showed in the pretest-posttest studies might be no more than spontaneous remission. Note that Eysenck did not conclude that psychotherapy was ineffective. He merely concluded that there was no evidence that it was, and he wrote of “the necessity of properly planned and executed experimental studies into this important field” (p. 323). You can read the entire article here:

http://psychclassics.yorku.ca/Eysenck/psychotherapy.htm

Fortunately, many other researchers took up Eysenck’s challenge, and by 1980 hundreds of experiments had been conducted in which participants were randomly assigned to treatment and control conditions. The results were summarized in a classic book by Mary Lee Smith, Gene Glass, and Thomas Miller (Smith, Glass, & Miller, 1980). They found that, overall, psychotherapy was quite effective, with about 80% of treatment participants improving more than the average control participant. Subsequent research has focused more on the conditions under which different types of psychotherapy are more or less effective.
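The “80% of treatment participants improving more than the average control participant” figure can be translated into a standardized effect size, provided one assumes that both groups’ outcomes are normally distributed with the same standard deviation. Under that assumption (ours, not a claim about how Smith, Glass, and Miller computed their summary), the proportion equals Φ(d), where Φ is the standard normal cumulative distribution function and d is the difference between the group means in standard-deviation units. A minimal sketch using Python’s standard library:

```python
from statistics import NormalDist

# Assumption: treatment and control outcomes are normal with equal SDs,
# so the share of treated participants above the control mean is Phi(d).
standard_normal = NormalDist(mu=0, sigma=1)

def share_above_control_mean(d):
    """Proportion of treated participants exceeding the average control participant."""
    return standard_normal.cdf(d)

def d_for_share(share):
    """Invert the relation: which d puts `share` of the treated group above the control mean?"""
    return standard_normal.inv_cdf(share)

d = d_for_share(0.80)
print(f"d corresponding to 80%: {d:.2f}")  # prints 0.84
print(f"round trip: {share_above_control_mean(d):.2f}")  # prints 0.80
```

In other words, under the normality assumption, 80% corresponds to the average treated participant scoring about 0.84 standard deviations better than the average control participant.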

In a classic 1952 article, researcher Hans Eysenck pointed out the shortcomings of the simple pretest-posttest design for evaluating the effectiveness of psychotherapy.

Wikimedia Commons – CC BY-SA 3.0.

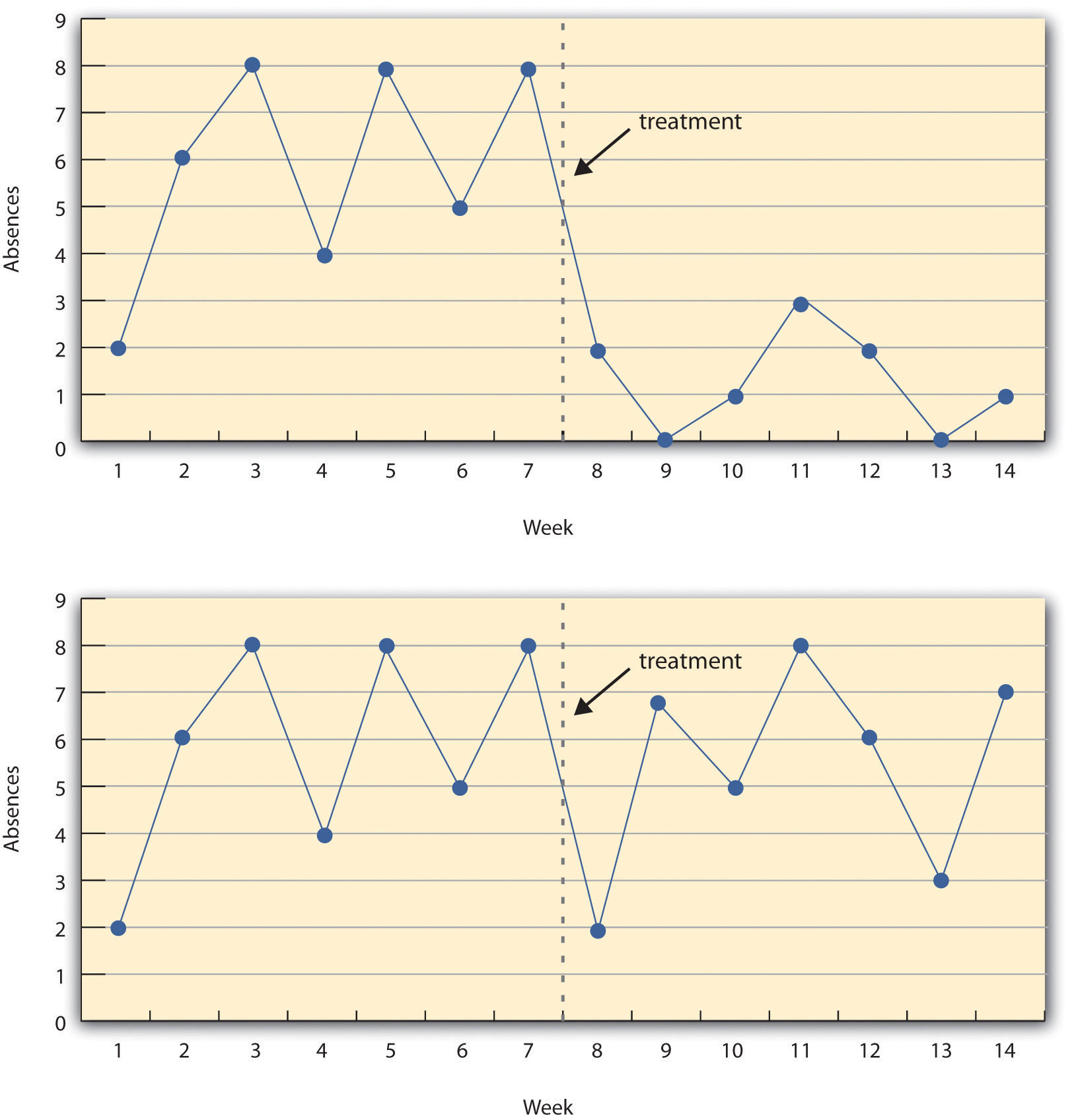

Interrupted Time Series Design

A variant of the pretest-posttest design is the interrupted time-series design. A time series is a set of measurements taken at intervals over a period of time. For example, a manufacturing company might measure its workers’ productivity each week for a year. In an interrupted time-series design, a time series like this is “interrupted” by a treatment. In one classic example, the treatment was the reduction of the work shifts in a factory from 10 hours to 8 hours (Cook & Campbell, 1979). Because productivity increased rather quickly after the shortening of the work shifts, and because it remained elevated for many months afterward, the researcher concluded that the shortening of the shifts caused the increase in productivity. Notice that the interrupted time-series design is like a pretest-posttest design in that it includes measurements of the dependent variable both before and after the treatment. It is unlike the pretest-posttest design, however, in that it includes multiple pretest and posttest measurements.

Figure 7.5 “A Hypothetical Interrupted Time-Series Design” shows data from a hypothetical interrupted time-series study. The dependent variable is the number of student absences per week in a research methods course. The treatment is that the instructor begins publicly taking attendance each day so that students know that the instructor is aware of who is present and who is absent. The top panel of Figure 7.5 shows how the data might look if this treatment worked: there is a consistently high number of absences before the treatment and an immediate and sustained drop in absences after it. The bottom panel shows how the data might look if this treatment did not work: on average, the number of absences after the treatment is about the same as the number before. The bottom panel also illustrates an advantage of the interrupted time-series design over a simpler pretest-posttest design. If there had been only one measurement of absences before the treatment, at Week 7, and one afterward, at Week 8, then it would have looked as though the treatment were responsible for the reduction. The multiple measurements both before and after the treatment suggest that the reduction between Weeks 7 and 8 is nothing more than normal week-to-week variation.

Figure 7.5 A Hypothetical Interrupted Time-Series Design

The top panel shows data that suggest that the treatment caused a reduction in absences. The bottom panel shows data that suggest that it did not.
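The Week 7 versus Week 8 point can be made concrete with a few lines of Python. The absence counts below are hypothetical (the figure’s actual values are not reproduced here); they are invented so that the treatment has no real effect, yet Weeks 7 and 8 happen to differ by three absences.

```python
import statistics

# Hypothetical weekly absence counts (invented for illustration) for a
# 14-week course; the instructor starts taking attendance after Week 7
# and, in this scenario, the treatment has no real effect.
absences = [5, 3, 6, 4, 5, 3, 6,   # Weeks 1-7, before the treatment
            3, 5, 6, 4, 3, 6, 4]   # Weeks 8-14, after the treatment
FIRST_TREATED_WEEK = 8

pre = absences[:FIRST_TREATED_WEEK - 1]
post = absences[FIRST_TREATED_WEEK - 1:]

# A simple pretest-posttest comparison would use only Weeks 7 and 8.
single_pair_change = post[0] - pre[-1]

# The interrupted time series uses every measurement on both sides.
mean_change = statistics.mean(post) - statistics.mean(pre)

# Largest week-to-week swing already seen before the treatment.
largest_swing = max(abs(b - a) for a, b in zip(pre, pre[1:]))

print(f"Week 7 to Week 8 change:     {single_pair_change:+d}")
print(f"Change in mean weekly count: {mean_change:+.2f}")
print(f"Largest pre-treatment swing: {largest_swing}")
```

Comparing only Weeks 7 and 8 suggests a drop of three absences, but the weekly means differ by only about 0.14, and a swing of three absences had already occurred before the treatment began.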

Combination Designs

A type of quasi-experimental design that is generally better than either the nonequivalent groups design or the pretest-posttest design is one that combines elements of both. There is a treatment group that is given a pretest, receives a treatment, and then is given a posttest. But at the same time there is a control group that is given a pretest, does not receive the treatment, and then is given a posttest. The question, then, is not simply whether participants who receive the treatment improve but whether they improve more than participants who do not receive the treatment.

Imagine, for example, that students in one school are given a pretest on their attitudes toward drugs, then are exposed to an antidrug program, and finally are given a posttest. Students in a similar school are given the pretest, not exposed to an antidrug program, and finally are given a posttest. Again, if students in the treatment condition become more negative toward drugs, this could be an effect of the treatment, but it could also be a matter of history or maturation. If it really is an effect of the treatment, then students in the treatment condition should become more negative than students in the control condition. But if it is a matter of history (e.g., news of a celebrity drug overdose) or maturation (e.g., improved reasoning), then students in the two conditions would be likely to show similar amounts of change. This type of design does not completely eliminate the possibility of confounding variables, however. Something could occur at one of the schools but not the other (e.g., a student drug overdose), so students at the first school would be affected by it while students at the other school would not.
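The comparison described above, whether the treatment school changes more than the control school, reduces to simple arithmetic. The sketch below uses hypothetical attitude scores for a handful of students per school (1 = very negative toward drugs, 7 = very positive); the scale and the numbers are invented for illustration. The resulting quantity is commonly called a difference-in-differences estimate.

```python
import statistics

# Hypothetical attitude scores (1 = very negative toward drugs,
# 7 = very positive) for a few students at each school.
treatment_school = {"pre": [5, 4, 6, 5, 4], "post": [3, 3, 4, 4, 2]}
control_school = {"pre": [5, 5, 6, 4, 5], "post": [4, 5, 5, 4, 4]}

def mean_change(school):
    """Average posttest score minus average pretest score for one school."""
    return statistics.mean(school["post"]) - statistics.mean(school["pre"])

treatment_change = mean_change(treatment_school)
control_change = mean_change(control_school)

# History and maturation should affect both schools similarly, so the
# control school's change estimates how much the treated students would
# have changed anyway. The effect estimate is the difference between
# the two changes.
effect_estimate = treatment_change - control_change

print(f"treatment school change:  {treatment_change:+.1f}")   # -1.6
print(f"control school change:    {control_change:+.1f}")     # -0.6
print(f"estimated program effect: {effect_estimate:+.1f}")    # -1.0
```

Here both schools become more negative toward drugs, but the treatment school does so by one point more, and only that extra point is attributed to the program. As the paragraph above notes, this estimate is still vulnerable to events that affect one school but not the other.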

Finally, if participants in this kind of design are randomly assigned to conditions, it becomes a true experiment rather than a quasi-experiment. In fact, it is the kind of experiment that Eysenck called for, and that has now been conducted many times, to demonstrate the effectiveness of psychotherapy.

Key Takeaways

- Quasi-experimental research involves the manipulation of an independent variable without the random assignment of participants to conditions or orders of conditions. Among the important types are nonequivalent groups designs, pretest-posttest designs, and interrupted time-series designs.

- Quasi-experimental research eliminates the directionality problem because it involves the manipulation of the independent variable. It does not eliminate the problem of confounding variables, however, because it does not involve random assignment to conditions. For these reasons, quasi-experimental research is generally higher in internal validity than correlational studies but lower than true experiments.

- Practice: Imagine that two college professors decide to test the effect of giving daily quizzes on student performance in a statistics course. They decide that Professor A will give quizzes but Professor B will not. They will then compare the performance of students in their two sections on a common final exam. List five other variables that might differ between the two sections that could affect the results.

Discussion: Imagine that a group of obese children is recruited for a study in which their weight is measured, then they participate for 3 months in a program that encourages them to be more active, and finally their weight is measured again. Explain how each of the following might affect the results:

- regression to the mean

- spontaneous remission

Cook, T. D., & Campbell, D. T. (1979). Quasi-experimentation: Design & analysis issues in field settings. Boston, MA: Houghton Mifflin.

Eysenck, H. J. (1952). The effects of psychotherapy: An evaluation. Journal of Consulting Psychology, 16, 319–324.

Posternak, M. A., & Miller, I. (2001). Untreated short-term course of major depression: A meta-analysis of outcomes from studies using wait-list control groups. Journal of Affective Disorders, 66, 139–146.

Smith, M. L., Glass, G. V., & Miller, T. I. (1980). The benefits of psychotherapy. Baltimore, MD: Johns Hopkins University Press.

Research Methods in Psychology Copyright © 2016 by University of Minnesota is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.

Quasi-Experiment: Understand What It Is, Types & Examples

Discover the concept of quasi-experiment, its various types, real-world examples, and how QuestionPro aids in conducting these studies.

Quasi-experimental research designs have gained significant recognition in the scientific community because they allow researchers to study cause-and-effect relationships in real-world settings. Unlike true experiments, quasi-experiments lack random assignment of participants to groups, which makes them more practical and ethical in certain situations. In this article, we examine the concept, applications, and advantages of quasi-experiments and their relevance to scientific research.

What Is A Quasi-Experiment Research Design?

Quasi-experimental research designs are research methodologies that resemble true experiments but lack the randomized assignment of participants to groups. In a true experiment, researchers randomly assign participants to either an experimental group or a control group, allowing for a comparison of the effects of an independent variable on the dependent variable. However, in quasi-experiments, this random assignment is often not possible or ethically permissible, leading to the adoption of alternative strategies.

Types Of Quasi-Experimental Designs

There are several types of quasi-experimental designs for studying causal relationships in specific contexts. Some common types include:

Non-Equivalent Groups Design

This design compares pre-existing groups, such as intact classes or schools, that receive different levels of the independent variable. Because the researcher does not randomly assign participants to the groups, the groups may differ in other key characteristics, and those differences must be considered when interpreting the effects of the independent variable.

Regression Discontinuity

This design uses a cutoff point or threshold on some measured variable (for example, a test score) to determine which participants receive the treatment or intervention. It assumes that participants just above and just below the cutoff are similar in all other respects, except for their exposure to the independent variable.
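A minimal sketch of how a regression discontinuity estimate can be computed, under a simple setup that is our own assumption rather than the article’s: a hypothetical program is given to everyone scoring below 50 on a pretest, and the outcome rises linearly with the pretest score. A separate straight line is fitted on each side of the cutoff, using only participants near it, and the gap between the two lines at the cutoff estimates the treatment effect. Because the data are simulated, the built-in effect (5 points) is known and the estimate can be checked against it.

```python
import random
import statistics

random.seed(0)

CUTOFF = 50.0       # hypothetical eligibility threshold on a pretest
BANDWIDTH = 10.0    # only use participants within 10 points of the cutoff
TRUE_EFFECT = 5.0   # effect built into the simulation, to check the estimate

# Simulated participants: those scoring below the cutoff receive the
# program. The outcome rises smoothly with the pretest score, jumps by
# TRUE_EFFECT for treated participants, and includes random noise.
participants = []
for _ in range(5000):
    score = random.uniform(0, 100)
    treated = score < CUTOFF
    outcome = 20 + 0.5 * score + (TRUE_EFFECT if treated else 0) + random.gauss(0, 2)
    participants.append((score, treated, outcome))

def value_at_cutoff(points):
    """Fit an ordinary least-squares line to (score, outcome) pairs and
    return its predicted outcome at the cutoff."""
    xs = [x - CUTOFF for x, _ in points]  # center so the intercept sits at the cutoff
    ys = [y for _, y in points]
    x_bar, y_bar = statistics.mean(xs), statistics.mean(ys)
    slope = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
             / sum((x - x_bar) ** 2 for x in xs))
    return y_bar - slope * x_bar

near = [(s, t, y) for s, t, y in participants if abs(s - CUTOFF) <= BANDWIDTH]
below = [(s, y) for s, t, y in near if t]
above = [(s, y) for s, t, y in near if not t]

# The estimated effect is the jump between the two fitted lines at the cutoff.
estimate = value_at_cutoff(below) - value_at_cutoff(above)
print(f"estimated jump at cutoff: {estimate:.2f} (true value {TRUE_EFFECT})")
```

Restricting the fit to a window around the cutoff reflects the design’s key assumption that only participants close to the threshold are comparable. The 10-point bandwidth is an arbitrary illustrative choice; a narrower window relies more heavily on that assumption but uses fewer participants.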

Interrupted Time Series Design

This design involves measuring the dependent variable multiple times before and after the introduction of an intervention or treatment. By comparing the trends in the dependent variable, researchers can infer the impact of the intervention.

Natural Experiments

Natural experiments take advantage of naturally occurring events or circumstances that approximate the random assignment found in true experiments. Researchers identify situations in which participants are exposed to different conditions, but they do not manipulate those conditions themselves.

Application of the Quasi-Experiment Design

Quasi-experimental research designs find applications in various fields, ranging from education to public health and beyond. One significant advantage of quasi-experiments is their feasibility in real-world settings where randomization is not always possible or ethical.

Ethical Reasons

Ethical concerns often arise in research when randomizing participants to different groups could potentially deny individuals access to beneficial treatments or interventions. In such cases, quasi-experimental designs provide an ethical alternative, allowing researchers to study the impact of interventions without depriving anyone of potential benefits.

Examples Of Quasi-Experimental Design

Let’s explore a few examples of quasi-experimental designs to understand their application in different contexts.

Non-Equivalent Groups Design

Determining the effectiveness of math apps in supplementing math classes.

Imagine a study aiming to determine the effectiveness of math apps in supplementing traditional math classes in a school. Randomly assigning students to different groups might be impractical or disrupt the existing classroom structure. Instead, researchers can select two comparable classes, one receiving the math app intervention and the other continuing with traditional teaching methods. By comparing the performance of the two groups, researchers can draw conclusions about the app’s effectiveness, provided they account for any pre-existing differences between the classes.

To conduct a quasi-experimental study like the one mentioned above, researchers can use QuestionPro, an advanced research platform that offers comprehensive survey and data analysis tools. With QuestionPro, researchers can design surveys to collect data, analyze results, and gain valuable insights for their quasi-experimental research.

How QuestionPro Helps In Quasi-Experimental Research?

QuestionPro’s powerful features, such as random assignment of participants, survey branching, and data visualization, enable researchers to efficiently conduct and analyze quasi-experimental studies. The platform provides a user-friendly interface and robust reporting capabilities, empowering researchers to gather data, explore relationships, and draw meaningful conclusions.

In some cases, researchers can leverage natural experiments to examine causal relationships.

Determining The Effectiveness Of Teaching Modern Leadership Techniques In Start-Up Businesses

Consider a study evaluating the effectiveness of teaching modern leadership techniques in start-up businesses. Instead of artificially assigning businesses to different groups, researchers can observe those that naturally adopt modern leadership techniques and compare their outcomes to those of businesses that have not implemented such practices.

Advantages and Disadvantages Of The Quasi-Experimental Design

Quasi-experimental designs offer several advantages over true experiments, making them valuable tools in research:

- Scope of the research: Quasi-experiments allow researchers to study cause-and-effect relationships in real-world settings, providing valuable insights into complex phenomena that may be challenging to replicate in a controlled laboratory environment.

- Regression Discontinuity: Researchers can utilize regression discontinuity to evaluate the effects of interventions or treatments when random assignment is not feasible. This design leverages existing data and naturally occurring thresholds to draw causal inferences.

Disadvantage

Lack of random assignment: Quasi-experimental designs lack the random assignment of participants, which introduces the possibility of confounding variables affecting the results. Researchers must carefully consider potential alternative explanations for observed effects.

What Are The Different Quasi-Experimental Study Designs?

Quasi-experimental designs encompass various approaches, including nonequivalent group designs, interrupted time series designs, and natural experiments. Each design offers unique advantages and limitations, providing researchers with versatile tools to explore causal relationships in different contexts.

Example Of The Natural Experiment Approach

Researchers interested in studying the impact of a public health campaign aimed at reducing smoking rates may take advantage of a natural experiment. By comparing smoking rates in a region that has implemented the campaign to a similar region that has not, researchers can examine the effectiveness of the intervention.

Differences Between Quasi-Experiments And True Experiments

Quasi-experiments and true experiments differ primarily in their ability to randomly assign participants to groups. While true experiments provide a higher level of control, quasi-experiments offer practical and ethical alternatives in situations where randomization is not feasible or desirable.

Example Comparing A True Experiment And Quasi-Experiment

In a true experiment investigating the effects of a new medication on a specific condition, researchers would randomly assign participants to either the experimental group, which receives the medication, or the control group, which receives a placebo. In a quasi-experiment, researchers might instead compare patients who voluntarily choose to take the medication to those who do not, examining the differences in outcomes between the two groups.
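The difference between these two approaches can be illustrated with a short simulation. In this hypothetical sketch, baseline health improves the outcome and, in the quasi-experimental version, healthier patients are assumed to be more likely to choose the medication; both the self-selection rule and the effect sizes are invented for illustration. Because the true effect is built into the simulation (2 points), we can see which estimate recovers it.

```python
import random
import statistics

random.seed(1)

TRUE_EFFECT = 2.0  # improvement built into the simulation

def simulate(n, assign):
    """Return the treated-minus-untreated difference in mean outcome.

    `assign(health)` decides whether a participant takes the medication.
    Baseline health also improves the outcome, which makes it a
    potential confounding variable.
    """
    treated, untreated = [], []
    for _ in range(n):
        health = random.gauss(0, 1)
        takes_drug = assign(health)
        outcome = 3 * health + (TRUE_EFFECT if takes_drug else 0) + random.gauss(0, 1)
        (treated if takes_drug else untreated).append(outcome)
    return statistics.mean(treated) - statistics.mean(untreated)

# True experiment: a coin flip decides, regardless of health.
randomized = simulate(10_000, lambda health: random.random() < 0.5)

# Quasi-experiment: healthier patients are more likely to choose the
# medication (a hypothetical self-selection rule).
self_selected = simulate(10_000, lambda health: health + random.gauss(0, 1) > 0)

print(f"randomized estimate:    {randomized:.2f}")
print(f"self-selected estimate: {self_selected:.2f}  (true effect {TRUE_EFFECT})")
```

The randomized comparison lands close to the true effect, while the self-selected comparison is inflated because the patients who chose the medication were healthier to begin with. This is the confounding that random assignment removes and that quasi-experiments must address in other ways.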

Quasi-Experiment: A Quick Wrap-Up

Quasi-experimental research designs play a vital role in scientific inquiry by allowing researchers to investigate cause-and-effect relationships in real-world settings. These designs offer practical and ethical alternatives to true experiments, making them valuable tools in various fields of study. With their versatility and applicability, quasi-experimental designs continue to contribute to our understanding of complex phenomena.

